Load packages

library(tidyverse)

library(MASS)

Motivation

It is well known that the notorious (Pearson’s) correlation cannot exceed an absolute value of 1, that is

$$|r| \le 1$$

or

$$-1 \le r \le 1$$

However, proving this fact is less straightforward. A classical way of proving the above inequality is by using the Cauchy-Schwarz inequality. From a teacher’s perspective, the CS inequality may not be ideal, because students may lack some of the knowledge necessary for appreciating this proof. In order to provide teachers (or anyone else for that matter) with an alternative, this post presents a proof that does not demand much more than basic algebra and some knowledge of descriptive statistics (in particular z-scores and correlation). This post builds on this paper.

Definitions

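Assume there are two sets of measurements (values), $X$ and $Y$, where $x_i$ and $y_i$ denote the $i$-th measurement, $i = 1, \ldots, n$. The (empirical) correlation can then be defined as

$$r = \frac{s_{xy}}{s_x s_y}$$

where $s_x$ denotes the standard deviation of $X$, and $s_{xy}$ the covariance of $X$ and $Y$.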

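Let’s denote $x_i - \bar{x}$ with $d_{x_i}$ (and $d_{y_i}$ in the obvious way).

Note the analogous definition of the covariance $s_{xy}$ and the variance $s_x^2$:

$$s_{xy} = \frac{1}{n} \sum_{i=1}^{n} d_{x_i} d_{y_i}, \qquad s_x^2 = \frac{1}{n} \sum_{i=1}^{n} d_{x_i} d_{x_i}$$

Hence

$$s_x^2 = s_{xx}$$

In other words: The variance equals the covariance if we let the second factor $d_{y_i}$ equal $d_{x_i}$.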
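The identity between variance and "covariance with itself" can be checked numerically. The following is a minimal sketch on made-up data; the vector `x`, the seed, and the helper names `s_xx` and `s_x2` are assumptions introduced only for illustration. Note that R's built-in `var()` and `cov()` divide by n - 1 rather than n, but the identity holds under either convention.

```r
set.seed(7)            # arbitrary seed, only for reproducibility
x <- rnorm(30)         # hypothetical example data

n  <- length(x)
dx <- x - mean(x)      # deviations from the mean

s_xx <- sum(dx * dx) / n   # covariance of x with itself (1/n version)
s_x2 <- sum(dx^2) / n      # variance of x (1/n version)

all.equal(s_xx, s_x2)          # identity with the 1/n convention
all.equal(cov(x, x), var(x))   # identity with R's built-in n - 1 convention
```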

As the z-score is defined as

$$z_{x_i} = \frac{x_i - \bar{x}}{s_x}$$

we may also define $r$ as

$$r = \frac{1}{n} \sum_{i=1}^{n} z_{x_i} z_{y_i}$$
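To see that the two definitions of the correlation agree, one can compute it both ways and compare with R's `cor()`. This is a sketch under stated assumptions: the data, the seed, and all variable names are hypothetical, and the standard deviation is computed with the 1/n convention (unlike R's `sd()`, which divides by n - 1). The choice of convention cancels out in the ratio, so the result should still match `cor()`.

```r
set.seed(42)                 # arbitrary seed, only for reproducibility
x <- rnorm(50)               # hypothetical example data
y <- 0.5 * x + rnorm(50)

n  <- length(x)
dx <- x - mean(x)
dy <- y - mean(y)

# Covariance and standard deviations, all with the 1/n convention
s_xy <- sum(dx * dy) / n
s_x  <- sqrt(sum(dx^2) / n)
s_y  <- sqrt(sum(dy^2) / n)

# Definition 1: covariance divided by the product of the standard deviations
r_cov <- s_xy / (s_x * s_y)

# Definition 2: mean product of the z-scores
z_x <- dx / s_x
z_y <- dy / s_y
r_z <- mean(z_x * z_y)

all.equal(r_cov, r_z)          # both definitions agree
all.equal(r_cov, cor(x, y))    # and agree with the built-in function
```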

Intuition about the magnitude of r

Let’s simulate some correlated data.

d <- mvrnorm(n = 100, mu = c(0,0) , Sigma = matrix(c(1, 0.7, 0.7, 1), nrow = 2), empirical = TRUE)

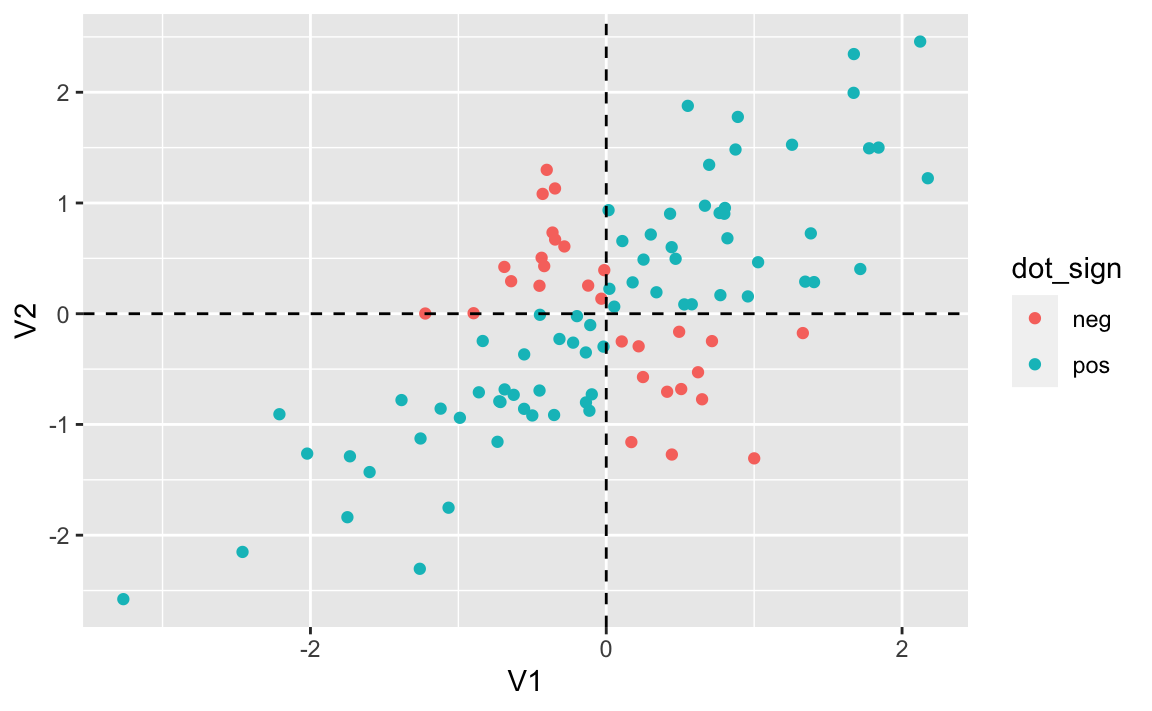

d <- as_tibble(d)

Let’s color the dots with respect to the sign of the product of V1 and V2.

d <- d %>%
  mutate(dot_sign = ifelse(V1 * V2 > 0, "pos", "neg"))

# Scatter plot with dashed reference lines at the means of V1 and V2
ggplot(d, aes(V1, V2, color = dot_sign)) +
  geom_point() +
  geom_vline(xintercept = mean(d$V1), linetype = "dashed") +
  geom_hline(yintercept = mean(d$V2), linetype = "dashed")

We see four “regions”, two with positive and two with negative sign.

Observe that in the two “positive” regions the product of V1 and V2 is positive, and in the two negative regions, their product is negative.
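The link between these regions and the correlation can be made concrete by summing the products separately for each sign. The sketch below regenerates comparable data on its own; the seed and the names `prod_xy`, `pos_sum`, `neg_sum`, and `r_from_products` are assumptions for illustration. Because `empirical = TRUE` forces the sample means to 0 and the sample standard deviations (n - 1 convention) to 1, the simulated values are already z-scores in that convention, so the sum of products divided by n - 1 should reproduce the correlation of 0.7.

```r
library(MASS)

set.seed(1)  # arbitrary seed, only for reproducibility
d <- mvrnorm(n = 100, mu = c(0, 0),
             Sigma = matrix(c(1, 0.7, 0.7, 1), nrow = 2),
             empirical = TRUE)

prod_xy <- d[, 1] * d[, 2]          # product of the two coordinates per point

table(sign(prod_xy))                # how many points fall in each kind of region

pos_sum <- sum(prod_xy[prod_xy > 0])   # contribution of the "positive" regions
neg_sum <- sum(prod_xy[prod_xy < 0])   # contribution of the "negative" regions

# The positive contributions outweigh the negative ones; their balance,
# averaged with the n - 1 convention, is the correlation
r_from_products <- (pos_sum + neg_sum) / (nrow(d) - 1)

all.equal(r_from_products, cor(d[, 1], d[, 2]))
```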

Some properties of z-scores and their sums and products

Further note that, as squares cannot be negative, this term must be nonnegative:

$$(z_{x_i} + z_{y_i})^2 \ge 0$$

for each $i$.

Similarly,

$$\frac{1}{n} \sum_{i=1}^{n} (z_{x_i} + z_{y_i})^2 \ge 0$$

Read the above equation as “the average squared sum of two z-scores must be nonnegative.”

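Now multiply out the binomial part of the last step:

$$\frac{1}{n} \sum_{i=1}^{n} z_{x_i}^2 + \frac{2}{n} \sum_{i=1}^{n} z_{x_i} z_{y_i} + \frac{1}{n} \sum_{i=1}^{n} z_{y_i}^2 \ge 0$$

The first and the last term are the variances of the z-scores, which equal 1, and the middle term is $2r$, so

$$1 + 2r + 1 \ge 0 \quad \Longleftrightarrow \quad r \ge -1$$

If we change the plus sign in the squared sum into a minus sign, that is, if we start from $(z_{x_i} - z_{y_i})^2 \ge 0$, the same steps give $2 - 2r \ge 0$, and we get $r \le 1$. In sum:

$$-1 \le r \le 1$$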

Hence, we have found that the correlation $r$ cannot exceed an absolute value of 1.
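The two key steps of the argument can also be verified numerically: the average squared sum of the z-scores should equal 2 + 2r, and the average squared difference should equal 2 - 2r. Since neither average can be negative, r is confined to the interval from -1 to 1. This is a sketch on made-up data; the helper `z()`, the seed, and the variable names are assumptions for illustration, and the z-scores use the 1/n standard deviation so that their mean square is exactly 1.

```r
set.seed(123)                  # arbitrary seed, only for reproducibility
x <- rnorm(200)                # hypothetical example data
y <- -0.3 * x + rnorm(200)

# Hypothetical helper: z-scores based on the 1/n standard deviation
z <- function(v) (v - mean(v)) / sqrt(mean((v - mean(v))^2))

z_x <- z(x)
z_y <- z(y)
r   <- mean(z_x * z_y)         # correlation as mean product of z-scores

mean_sq_sum  <- mean((z_x + z_y)^2)   # nonnegative by construction
mean_sq_diff <- mean((z_x - z_y)^2)   # nonnegative by construction

all.equal(mean_sq_sum,  2 + 2 * r)    # implies r >= -1
all.equal(mean_sq_diff, 2 - 2 * r)    # implies r <=  1
abs(r) <= 1
```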